Great thread, I'm playing catch-up here. Gregg's buzzword takedown should be printed on a plaque and hung in every vendor office (my company included).

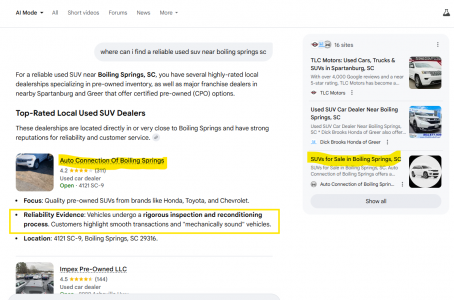

The ground floor comments are spot on. We're in here debating AI-ready VDPs and RAG pipelines while most dealers can't tell you if their NAP is consistent across 50 directories. Most of the time when I talk to dealers about SEO, their GBP isn't optimized, just claimed and abandoned like a New Year's gym membership. Local citations are a mess. Core Web Vitals failing. Canonicals broken. Most aren't ready for "Make us show up in LLMs," and most don't want to stretch before a workout.
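For anyone who wants to see what "is our NAP consistent" looks like in practice, here's a rough sketch. It assumes you've already exported name, address, and phone from each directory into a dict. The function names, the sample listings, and the "most common version wins" rule are all my own placeholders, not any directory's API, and the normalization is deliberately naive (it won't know that "St" and "Street" are the same thing).

```python
import re
from collections import Counter

def normalize_nap(name, address, phone):
    """Reduce a listing to a comparable form: lowercase, drop punctuation, keep phone digits."""
    def clean(text):
        return re.sub(r"[^a-z0-9]+", " ", text.lower()).strip()
    digits = re.sub(r"\D", "", phone)[-10:]  # assumption: US 10-digit numbers
    return (clean(name), clean(address), digits)

def find_nap_mismatches(listings):
    """Return the directories whose normalized NAP differs from the most common version."""
    normalized = {directory: normalize_nap(*nap) for directory, nap in listings.items()}
    canonical, _ = Counter(normalized.values()).most_common(1)[0]
    return sorted(d for d, nap in normalized.items() if nap != canonical)

# Hypothetical sample data, not real listings.
listings = {
    "gbp": ("Example Chevrolet", "123 Main St.", "(555) 010-1234"),
    "yelp": ("Example Chevrolet", "123 Main St", "555-010-1234"),
    "bing": ("Example Chevy", "123 Main Street", "555.010.1234"),
}
mismatches = find_nap_mismatches(listings)
print(mismatches)
```

Here only the "bing" entry gets flagged, since the other two differ only in punctuation. A real audit would need abbreviation handling and a human deciding which version is actually correct.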

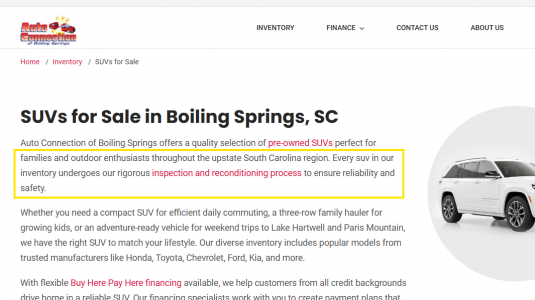

Once the basics are actually handled, content is where leverage lives. We use Hrizn with our clients. Best content OS in the space and I'm not being polite or pitchy. Their Dealer DNA components are the real deal. They're not generating another soulless Silverado blog post that reads like it was written by a chatbot with a thesaurus. They're building content tied to YOUR store, YOUR market, YOUR identity. With the March spam update live, every dealer running copy-paste content across 12 rooftops has gotta be rethinkin' their content strategy.

Where I'd really push this thread selfishly... the biggest problem in automotive isn't content or infrastructure. It's that every dealership's context lives in the GM's gut and gets distributed via vibes and monthly vendor phone calls (if they make them). 25+ SaaS platforms all operating in silos. Website vendor gets one story. Ad agency gets another. BDC is out there freelancing. We've been running on gut-distributed context for 30 years and calling it a digital strategy.

What could soon replace gut is a context or intelligence syndication layer. Capture what makes the dealership unique, update in real time, and push it everywhere. The dealers who win next won't be the ones with the most tools. They'll be the ones whose intelligence is actually organized and accessible instead of trapped in one person's head or gut. There are tons of providers building this layer into THEIR applications. To me, that's the same walled garden problem we've struggled with in this industry for the last 25 years. Every vendor wants to be the brain. Nobody wants to be the nervous system. Content, ads, and comms could all be leveraged and operate harmoniously.

What's missing is a single context engine that syndicates intelligence out to every provider AND pulls the invisible stuff back in. Training data. Cross-platform comms. API usage and cost. Inventory. The operational signal that's actually driving output and nobody's monitoring because it's buried across 30 logins nobody opens. Who knows. This is moving so fast it's tough to keep up.
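To make the "nervous system" idea a little less hand-wavy, here's a toy sketch of the shape I have in mind: one store of dealership context that pushes every change out to whoever subscribes, and collects operational signals coming back. Everything in it (the `ContextEngine` class, the provider names, the keys and values) is hypothetical and made up for illustration. It's not how any existing product works, and a real one would need auth, persistence, and actual vendor integrations.

```python
from dataclasses import dataclass, field
from typing import Callable, Dict, List

@dataclass
class ContextEngine:
    """Toy hub: syndicates context out to providers and collects signals back in."""
    context: Dict[str, str] = field(default_factory=dict)
    subscribers: Dict[str, Callable[[Dict[str, str]], None]] = field(default_factory=dict)
    signals: List[dict] = field(default_factory=list)

    def subscribe(self, provider: str, callback: Callable[[Dict[str, str]], None]) -> None:
        """Register a provider and send it the current context right away."""
        self.subscribers[provider] = callback
        callback(dict(self.context))

    def update(self, key: str, value: str) -> None:
        """Change one piece of context and push a fresh copy to every provider."""
        self.context[key] = value
        for callback in self.subscribers.values():
            callback(dict(self.context))

    def report(self, provider: str, metric: str, value: float) -> None:
        """Pull an operational signal (cost, usage, etc.) back in from a provider."""
        self.signals.append({"provider": provider, "metric": metric, "value": value})

    def total(self, metric: str) -> float:
        """Sum one metric across all providers."""
        return sum(s["value"] for s in self.signals if s["metric"] == metric)

# Hypothetical providers, each just keeping its own copy of the context.
website_view: Dict[str, str] = {}
ads_view: Dict[str, str] = {}

engine = ContextEngine()
engine.subscribe("website", website_view.update)
engine.subscribe("ads", ads_view.update)

engine.update("store_voice", "placeholder: how this store talks to customers")
engine.report("ads", "api_cost_usd", 42.5)
engine.report("website", "api_cost_usd", 7.5)

print(website_view == ads_view, engine.total("api_cost_usd"))
```

The point of the sketch is that the website and the ad agency end up with the same story because they read from one source, and the cost signal lives in one place instead of across 30 logins.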
